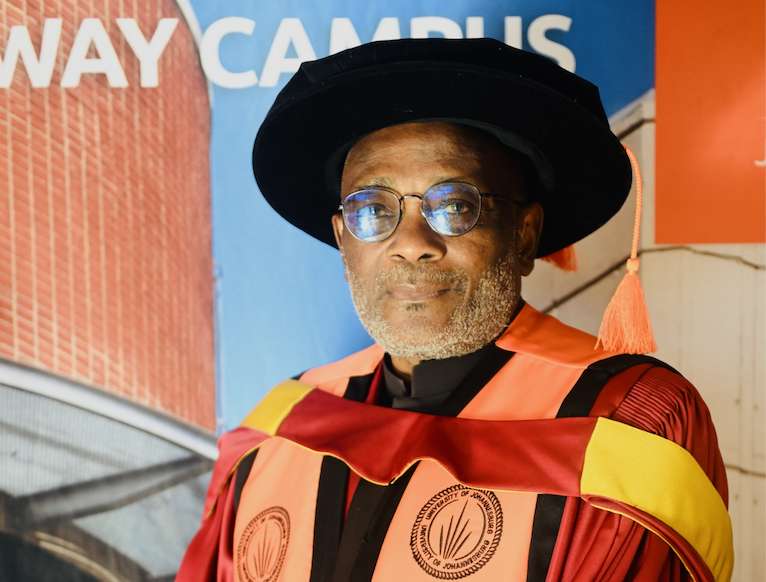

By: Bhaso Ndzendze

We do not know nearly enough about conflict, and as a result it still ravages many pockets of the world today. But we do know some things about conflicts, and these insights and models have been very useful in stomping out fires, from Western Europe in the 1950s, to the Middle East (particularly between Israel and Egypt) in the 1970s and Africa in the 1990s, though some tragic mishaps have also been registered. Nevertheless, we are in an era of great changes, none more so than in artificial intelligence. According to recent figures from a German-based research centre (Statista), in 2017 the global AI market grew by 150% from 2016 levels, reaching US$4.8-billion. A similar growth trajectory is expected for 2018, with year-on-year growth of upwards of 152%, writes UJ’s Bhaso Ndzendze.

As it is predicted to affect every facet of life as we know it, the question remains: what effects will the wider adoption and implementation of AI have on the arena of human conflict?

On the face of it, the rise and implementation of AI in state-making is a net neutral; after all, machines are only capable of executing what their human operators instruct and program them to do. Some, however, are pessimistic; indeed Albert Einstein himself, when reflecting on the terrifying capabilities brought on by the realisation of nuclear weaponry (a process he had helped bring about), exclaimed that “I know not with what weapons World War III will be fought, but World War IV will be fought with sticks and stones,” suggesting that a nuclear winter would result and thousands of years of human progress would be reversed. Perhaps there may be some truth to this. The scale of capabilities now in human hands would, in the event of a global confrontation, reduce the few survivors to something like a Stone Age existence, with few if any social institutions remaining in place. But it need not get to this level. When we look deeper into some of the promises held by AI, there are indicators that innovations in this area may lead to lessened conflict both within countries and between countries.

Technological advancement is both a symptom and a cause of human progress. For not only do we make machines, but machines in turn reconfigure our realities and our policies. This is also reflected in conflict. It was technological advancement which made the Second World War the most devastating in human history, but it was also technology which mitigated it. Not only did innovations in weaponry result in greater capacity for destruction, but machine-based cryptanalysis, most prominently the Ultra decoder invented by Alan Turing (also the inventor of the computer), was used by the Allies to intercept and decode German war communications, so that they could know what the Nazis were planning before they executed it.

This touches on something at the heart of the existing models of conflict: the so-called information problem. This refers to the phenomenon whereby individual states, because all of them maintain secrecy, are left only to estimate whether their would-be ‘enemy’ is in a state of readiness for conflict, and for how long that enemy could sustain its war effort if such a conflict broke out. This can be done by assessing the potential opponent’s war industries in areas such as arms manufacturing, as well as nuclear stockpiles in some cases. Short of espionage, however, which cannot itself be fully effective in the face of counterespionage, states are in no position to fully know how ready the other state is for a confrontation, and vice versa. Unable to fully know the other’s capabilities (and therefore their own chances of success or failure), states are sometimes driven by overconfidence in their own capabilities and go to war nonetheless. With the advent of AI, this problem can be solved. Machine computing is far more capable than its human counterpart at generating calculations. At the same time, unlike human cognition, which has limitations, machine computing can be improved upon. This is what has been taking place with the rise of quantum computing, which allows data to exist in a multitude of states at the same time, so that quantum computers have the ability to hold exponentially more information and perform faster calculations.

Closing the information problem can therefore act as a deterrent. This was what contributed to the end of the Cold War standoff between the United States and the Soviet Union. President Ronald Reagan did an effective job of signalling American military supremacy and the breadth of the gap between US and Soviet military capabilities by letting it be known that Washington was embarking on a military programme to enable its military to make use of satellites to attack any country anywhere in the world. This programme, ‘Star Wars’ as it came to be known, dispelled any Soviet perceptions of equal strength with the US and led to Soviet acceptance of US demands regarding the Berlin Wall, paving the way for a series of events which led to the dissolution of the USSR and an appearance of peace for some time.

Through AI, one state can precisely calculate the military capabilities of its potential belligerent and in the process find out its would-be opponent’s actual level of readiness and potential for a sustained military effort. In this way at least, wars as a result of “bluffing” – initiated by relatively weaker states against relatively stronger ones – can be discounted and perhaps eventually gotten rid of. And in the event of conflict, AI could be used for the precise location of enemy strategic and military targets and the sparing of civilians, reducing the number of casualties.

There is the old adage, coined by the German military thinker Carl von Clausewitz, that war is a continuation of politics by other means and that technology changes very little – the strategic concerns which are the causes of war remain unmoved; this is true to the extent that disagreement and contestation between states will hold or be exacerbated in the future. But regarding AI this prognosis has its limits and may actually be turned on its head. Since the militarisation of AI is only one facet of its application, it stands to reason that its other uses – especially commercial ones – can obstruct the path towards militarism. Insofar as it requires constant innovation, AI requires constant knowledge and technological exchange among countries. AI largely remains a scientific pursuit and a business sector. In addition to this, no single country can build a computer or any form of technology on its own, nor can it consume all of it by itself; thus profit considerations are a reliable force where politics may fail. There is always the need for foreign materials, cheap foreign labour in manufacturing, free and open sea routes for transportation, and foreign markets (as we have seen in the US trade war against China, the US is deeply reliant on Chinese technology and vice versa). Moreover, AI necessitates expert communities that transcend borders. Globally, in fact, about 38.6% of new patents in 2016 were filed by individuals who were not citizens of the country where their patents were filed.

Despite the headline-grabbing trade war at the behest of the US, the general trend among states has been one of integration and opening up. Disproving earlier predictions of the disintegration of the EU in the wake of Brexit, the UK’s troubles have actually dampened similar sentiments in other countries (most notably in France and Holland, where pro-EU governments were elected and re-elected in 2017 respectively). In just the past year, the European Union has deepened its trade ties with SADC, while also drawing closer to Japan (signing the biggest trade deal in history in July), and crucially getting closer to China – indeed one of the least understood phenomena today is the extent to which EU integration with the Chinese economy far surpasses that of the US with China, with the Belt and Road Initiative set to make these parties all the more integrated and therefore more likely to wish each other well, and far less likely to declare wars of any kind on each other. That economic interdependence discourages war is a principal article of faith among international relations scholars and watchers of foreign affairs. Indeed, in its original inception, the EU was meant to link the war industries of the European states so that they would be interdependent; this also defeated the information problem in that it peeled away the wall of secrecy, such that the Germans and the French could each be aware if the other suddenly began a military build-up.

Some tough choices can be brought on by foreknowledge. British wartime Prime Minister Winston Churchill, for example, supposedly knew in advance of a pending German attack, but also knew that by acting pre-emptively Britain would indicate to the Germans that their encryptions had been solved and prompt them to initiate new encryption systems. Thus on the 14th and 15th of November 1940, the town of Coventry was left open and vulnerable to air bombing by the Germans, leading to massive casualties. There are also realistic fears of AI with military capabilities ending up in the hands of terrorist organisations, which do not have the same self-preservation rationales as states and which may as a result not abide by the same rules as states. Indeed, while (according to US-based research firm Tractica) about 8 out of the top 10 applications of AI are non-military (including civilian machine and vehicular object detection, identification and avoidance; employee recruitment; and application in healthcare), the potential remains for military application of AI in such areas as localisation and mapping as well as visual recognition.

But, equally, these forms of AI can be useful in intercepting terrorist organisations and, just as importantly, in predicting the probable future sites of terrorist attacks, thereby allowing states to fortify defences to protect people ahead of time, or to intercept the attacks before they take place. Some optimism is warranted, and in order.

Bhaso Ndzendze is the author of the Beginner’s Dictionary of Contemporary International Relations.

This article was first published in the Mercury, 13 August 2018.

*The views expressed in the article are those of the author/s and do not necessarily reflect those of the University of Johannesburg.