At a minimum, new technologies have played a complementary role in the decline of democracy, even if they have not caused it directly. The long-term effect of this is a question for the future, but it contains the ingredients for tech-based authoritarianism, write Professor Tshilidzi Marwala and Dr Bhaso Ndzendze.

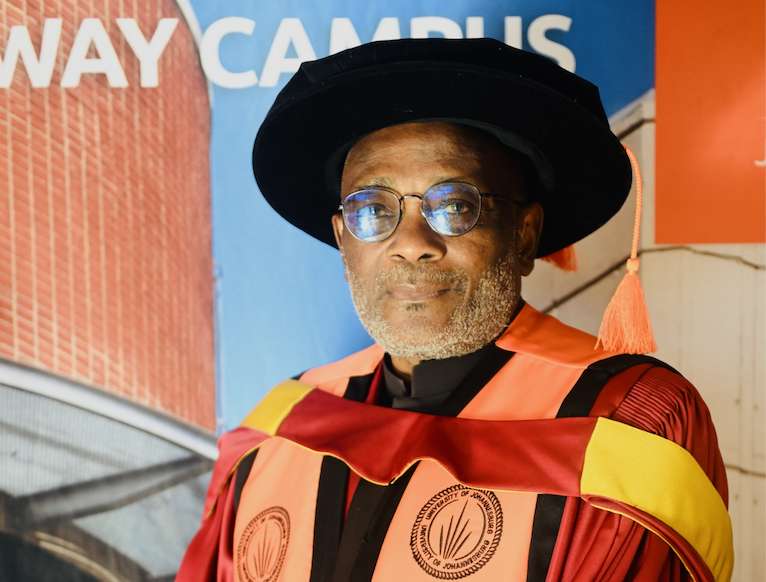

Prof Marwala is the Vice-Chancellor and Principal of the University of Johannesburg (UJ). Dr Bhaso Ndzendze lectures a postgraduate course on Technology Dynamics in International Relations at UJ and directs research at its Centre for Africa-China Studies. They recently penned an opinion piece published by Daily Maverick.

Artificial intelligence and emerging technologies are powerful tools – but can be bad for democracy – Prof Tshilidzi Marwala & Dr Bhaso Ndzendze (22 March 2021)


Human Rights Day commemorates the Sharpeville massacre of March 1960. It is meant to inspire reflection on one of the worst episodes of the apartheid era. One of the inevitable questions that arises is: “What made the system endure for so long?” One of the decisive factors in apartheid’s longevity was the state-of-the-art photography and film products acquired from the Polaroid Corporation, which were put to use in surveillance, including in the production of the abhorrent “dompasse”, the passbooks forced on the African population. It was protests by the company’s own employees that set in motion the call for a boycott of South Africa.

There are many modern-day Polaroids: companies that, implicitly or otherwise, are complicit in the activities of repressive regimes. Technology and politics are both human-made inventions that are meant to be mechanisms for cooperation and the allocation of resources. But, evidently, they can also be instruments of subjugation. How far they serve the former rather than the latter is a matter of debate. So too are the effects of the interaction between them.

With the advent and growing use of artificial intelligence (AI) and other emerging technologies in political processes, this is a debate worth engaging. In our view, the challenges abound, but the benefits can still far outweigh the costs.

Simply put, AI refers to the wide range of programmed systems capable of performing functions typically associated with the human brain, including analysis and prediction. Through its algorithms, AI can perform these tasks far more efficiently than humans and at a much greater scale.

Moreover, by learning from data (including through unsupervised learning), AI systems can steadily acquire new capabilities. Around the world, AI and related emerging technologies have been used in areas with political implications, among them political campaigns (microtargeted advertisements and the more familiar robocalls), national defence programmes, and blockchain for political fundraising and for securing ballots.

Other indirect trends are to be seen in labour relations (AI as a threat to jobs), the potential of AI for discrimination (bias along the lines of gender and race), and its implications for manipulating and funnelling information.

Democracy and artificial intelligence appear to be negatively correlated. The more widely AI has diffused, the fewer countries have qualified as free societies. Are these trends related?

At a minimum, technologies have played a complementary role in the decline of democracy, even if they have not caused it directly. Freedom House observes that there was democratic backsliding in every region in the 2010s. Last year marked the 15th consecutive year of decline in the number of countries that could be classified as “free”, a reversal of the trajectory seen from the early 1990s until 2005.

Today, fewer than 20% of the world’s population live in countries that reach this classification. Covid-19 has hardly helped matters. The Economist Intelligence Unit (EIU) opens its 2020 report with the observation that “across the world in 2020, citizens experienced the biggest rollback of individual freedoms ever undertaken by governments during peacetime (and perhaps even in wartime)”.

Covid-19 has provided a rationale for greater surveillance and, with it, a growth in the ubiquity of emerging technologies. The long-term effect of this is a question for the future, but it contains the ingredients for tech-based authoritarianism.


There is also the risk of autocracies consolidating themselves by acquiring 21st-century technologies while maintaining politically stifling environments. Notably, whereas coups were the main instrument of political change in the 20th century, the primary mechanism for transition to democracy in the 21st has been mass mobilisation and protest. From the Arab Spring to Burkina Faso, Georgia and Kyrgyzstan, urban, cellphone-wielding citizens have led the charge.

In sum, leaders used to fear coups, and now they fear protesters. Recognising technology’s role in lowering the costs of mobilisation, regimes have therefore taken steps to deny access to it and to sow division and confusion through the use of bots on online platforms. Evidently, it is working.

One of the first pieces to highlight the threat was a 2020 Foreign Affairs article by Andrea Kendall-Taylor, Erica Frantz and Joseph Wright, published just over a year ago. As they argue convincingly, the mere knowledge of possible digital surveillance makes citizens more likely to be passive. Many of the studies rightly focus on China’s growing role as a supplier of advanced technologies, including state-of-the-art AI-based facial recognition, to the governments of Cambodia, Russia, Uganda and Zimbabwe, among others.

In the past decade, China has accrued a sizeable slice of the AI market, with five of the 10 largest AI patent holders being Chinese organisations and corporations (namely the Chinese Academy of Sciences, State Grid Corp, Baidu, Tencent and Huawei).

Domestically, China’s “great firewall” and plans for a data-based social credit system are well known. But there is also a drawback to an American-driven debate: inevitably, its authors suffer from blind spots. Edward Snowden’s revelations remind us to be wary of the United States itself, which has conducted surveillance of its citizens (not to mention the rest of the globe) with apparent impunity, with technology companies as accomplices through backdoors in consumer devices and systems.

More fundamentally, an excessive focus on China risks producing an incomplete analysis, given the market share of Western companies, whose products have become part of communications infrastructure in many countries around the world and which hold most of the AI patents.

Another way AI may threaten democracy is through the indirect effects of automation and worker displacement. This has depressed the working class, diminished the middle classes (a vital force for democracy) and driven them into the arms of populist and anti-democratic politicians.

The advent of AI and other emerging technologies has also meant that our underlying social principles have been put under strain. One dilemma has been over the ethics of genetic enhancement technology (GET), an outcome of a convergence of insights in the biological and technological spheres (including the use of AI in genomics).

Philosophers have advanced numerous arguments in the debate over whether such technologies are permissible and what they imply for liberal democracy. One view under discussion among ethicists is that granting access to GET gives an unfair advantage and may create a “genetic caste system” that perpetuates our current socioeconomic disparities.

In another sense, unequal access to the technical expertise required to enter the labour market has given rise to a phenomenon some political economists are terming techno-feudalism. That is, not only is the internet (and, increasingly, other industries) dominated by a handful of powerful, monopolistic corporations (termed Big Tech), but our society also increasingly runs the risk of a class of individuals being locked out of meaningful economic participation and upward mobility.

This underscores the importance of common global standards and values underlying the protocols on which these technologies are based, so that it is clear when some governments curtail them. A future referendum on whether to adopt some forms of advanced AI is also a possibility and would be justified given the societal and collective implications. Above all, the growing prevalence of emerging technologies in the political process requires a wary public. This raises the question of whether citizens are capable of understanding the threat.

Part of the problem is the technically complex nature of AI. Political scientists have already observed that technology has the effect of creating a small decision-making elite composed of technocrats and governing officials. Countering this requires citizen education. Civil society should play a role in understanding not only the threats but also the opportunities. An educated citizenry is also less vulnerable to baseless conspiracy theories.

New times indeed call for new types of leaders, but they also call for a new kind of citizen.

The views expressed in this article are those of the author/s and do not necessarily reflect those of the University of Johannesburg.